‘Time changes understanding’ struck me when I read the phrase the other day, in Richard Mabey’s book The Cabaret of Plants. My third edition of a book on organisation design will be published in March 2022. Next month I am speaking to a group about this third edition. Has time changed my understanding of organisation design? I started to think on this.

I fished out the first two editions to take a look. Jumping out as I flicked through the first few pages of edition one was the example of the Miss Army Kit (which I was discussing as an example of product design for a specific audience). It comes with ‘15 must-have female emergency items’. At the time you could buy it in pink and purple. It was gone in edition two! (And it is now a discontinued line.)

Time has changed my understanding of gender identification in the workforce, and how that may play out in organisation design. (See the article Redefining Gender at Work: how organisations are evolving).

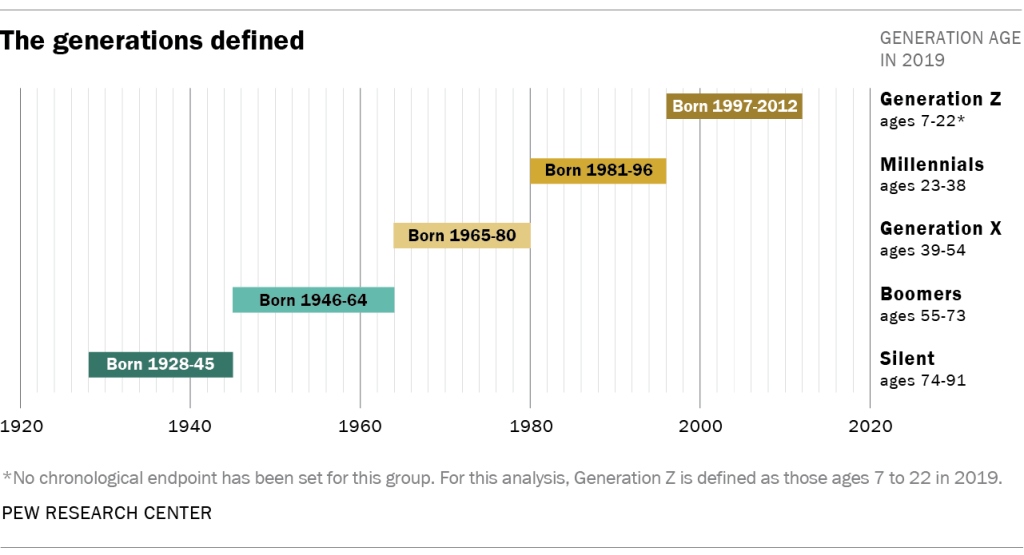

Time has also changed my understanding of other social/societal changes that shape design, including demographic changes, workplace expectations, diversity and inclusion questions, wage and reward differentials, and the meaning and value of work. Thus, the third edition has discussions on diversity, in all its forms, threaded through the text with examples of several organisations’ approaches to diversity and designing. Looking at the indexes, ‘diversity’ does not appear in editions one and two but is there in edition three.

Other macro trends are reflected differently across the three editions. If I take the acronym STEEPLE (which appears in all three editions and covers social, technological, environmental, economic, political, legal and ethical trends), then I see a very different range of discussion under each heading, which reflects the way time changes understanding of the issues. I’ve already mentioned societal trends so, moving on:

Technology has its own entry in the index of the first edition, but by the second edition it had become so interwoven with organisational life that there is no specific indexed mention of it, nor is there one in the third edition. But between the second and third editions technologies changed rapidly, as did my understanding of the way technology shapes organisations, for good and bad. This understanding cannot be static as advances keep coming. Not in the third edition, but what would be in a fourth edition (no, this is not on the cards), is a discussion of metaverses. Technology in the third edition appears in discussions of digital twins, technologies for remote and virtual working, and software as a service (e.g. Salesforce, G Suite, cloud-based Microsoft Office 365). None of these were mentioned in the first or second editions.

Take-up of all of them has increased rapidly between the second and third editions, driving significant design changes in organisations. Designing risk mitigations as part of deploying these services is a current weak point in design work. When the technologies go down, as Facebook’s did last week, organisations can grind to a halt. (The same applies to the design of supply chains.) I’m now wondering how to mitigate the many risks associated with these types of technologies, including ransomware attacks, surveillance, hacking, and outages.

Environmental considerations have changed, and are changing, understanding of organisation design – think of the rise of B-corps in the last few years. One of the changes in the third edition is my replacement of ‘mission and vision’ with ‘purpose’, reflecting a shift in my understanding of organisational intent.

Equally, thinking around physical workplace design is changing to accommodate reductions in environmental load related to commuting, resource usage, health and safety. Covid-19, for example, has accelerated thinking and action on touchless workplaces. And just look around to see how many organisations are pledging to be ‘net zero’ (a phrase that makes an entry in the third edition). Think of all the design implications of this pledge.

Last week’s Economist warned of ‘stagflation’, calling it ‘a particularly thorny problem because it combines two ills—high inflation and weak growth—that do not normally go together.’ A couple of weeks earlier (on 21 September), and in relation to gas price rises, Reuters reported: “It is quite clear there is a growing sense of unease about the economic outlook as a growing number of companies look ahead to the prospect of rising costs.” The third edition differs from the previous two in discussing macro-economic trends and the way organisations have to imagine them in advance, prepare for their possible eventuality and then respond to them if they occur.

In the third edition, I have a completely re-written chapter on continuous design, and a completely new section on systems thinking. These two themes permeate the third edition, highlighting how time has changed my understanding of the need to shift focus from project-based organisation design to continuous organisation design done in the context of interlocking and interdependent systems.

Another way the third edition differs from the previous two is in the discussions on politics. Gareth Morgan’s book ‘Images of Organisation’ has, as one of the eight metaphors, ‘organisations as political systems’, and I am increasingly of the view that organisations are political systems, working within broader political systems. (Look at this week’s announcement that Microsoft is pulling LinkedIn from China.) Think about how LinkedIn’s design will be changing to accommodate its decision to pull out of China.

Recognising the power and pull of internal and external politics (with both a lower case and capital ‘p’) as one of the fundamentals to factor into organisation design work is something I have come to understand over time, perhaps as a result of my years working in the UK Civil Service. Designers are never working in a political vacuum, and I pick this up in the third edition.

Changes to legal and regulatory frameworks also have a major impact on organisation design. For example, the UK’s Trade Act 2021 has impacted businesses and the way they are designed, as have the UK’s new immigration rules (effective from January 2021) and the EU-UK Trade and Cooperation Agreement. Again, the third edition pays more attention than previous editions to the legal and regulatory systems that are material to the design of organisations. (One of the recommendations is that continuous organisation design work should always involve multiple disciplines, including legal advisors/experts.)

There is less on ethics in the workplace in the third edition than I would have liked. But it is an aspect of design that needs close attention, as it frequently involves dilemmas on which people have very different views. For example, is it ethical to insist on single source supply and then force the supplier into bankruptcy if demand for the item supplied drops? This example raises a design question related to the boundaries of the organisation. Is the supplier part of your organisation or not (in design terms), and for the supplier is your organisation part of their design?

I’ve outlined a few of the ways that time has changed my understanding of organisation design. Has it changed yours? How? Let me know.
